Deterministic Guardrails for AI Systems

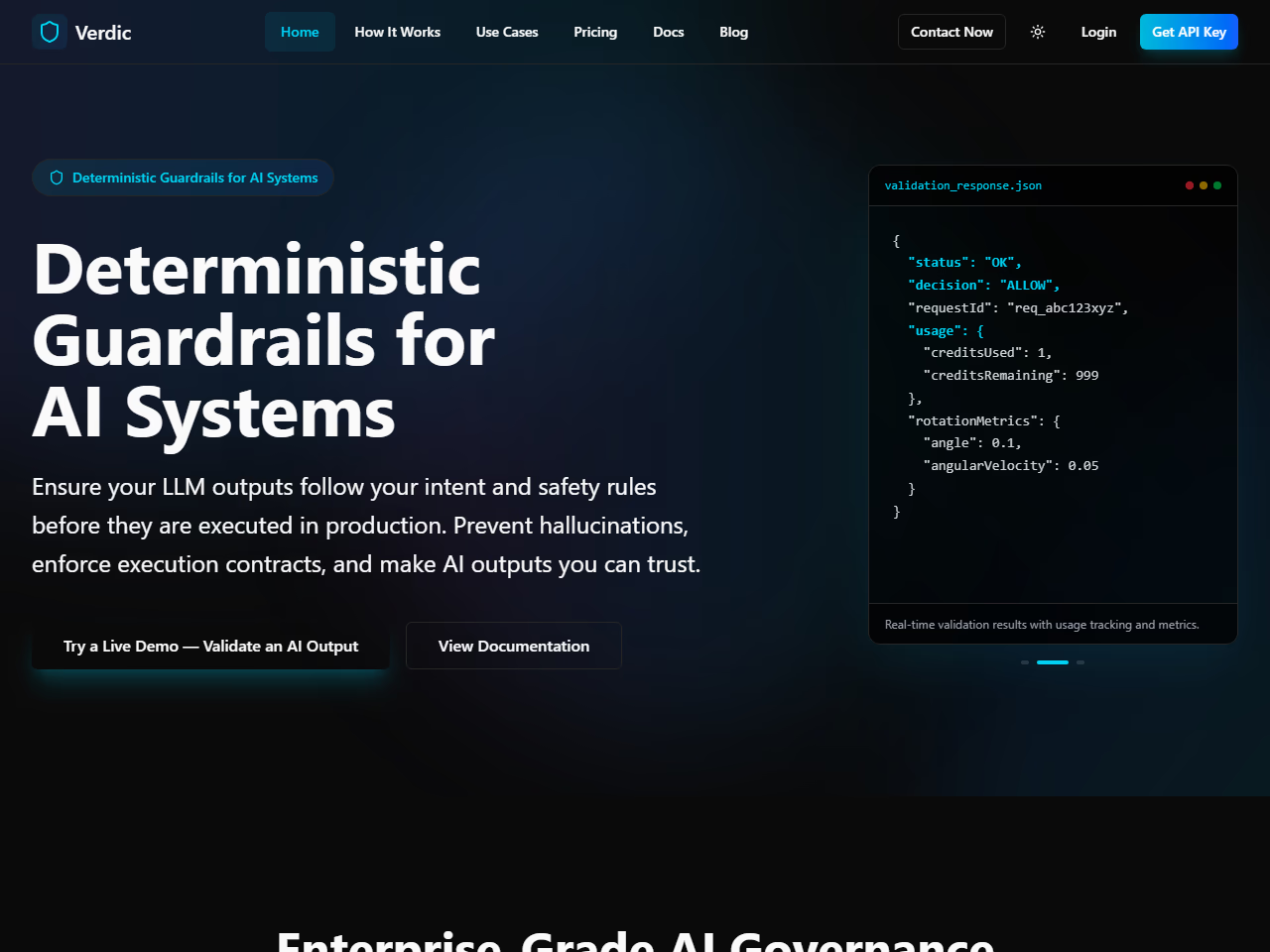

Verdic Guard provides deterministic AI governance and validation for large language models (LLMs), aimed at compliance, reliability, and predictability in production environments. The platform helps prevent hallucinations by enforcing strict execution contracts that check AI outputs against the specified intent, safety requirements, and output format. Designed for teams deploying production AI applications, Verdic Guard supports enterprise-grade governance by applying safety constraints and compliance policies across AI infrastructure. Its features include anti-hallucination measures, task alignment enforcement, modality constraints, and execution deviation risk analysis. Together these capabilities monitor AI behavior, raise alerts on deviations, and provide safe failure modes that preserve the user experience. Organizations in healthcare, financial services, and legal tech use Verdic Guard to deploy AI systems that are safe, compliant, and trustworthy.

LLM outputs can hallucinate or break compliance in production

Validates outputs against intent and contracts, then allows, downgrades, or blocks them

Teams shipping production LLM applications that need reliability
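
To make the validate-then-decide idea concrete, here is a minimal Python sketch of a deterministic output check. It is not Verdic Guard's API: the `Contract` class, the `validate` function, the `Decision` values, and the specific rules (required JSON keys, banned phrases) are all hypothetical names and assumptions chosen for illustration. The only point is that the same output and the same contract always yield the same allow, downgrade, or block decision.

```python
import json
from dataclasses import dataclass, field
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    DOWNGRADE = "downgrade"
    BLOCK = "block"


@dataclass
class Contract:
    """Hypothetical execution contract (illustrative, not a Verdic Guard type)."""
    required_keys: set                       # keys the JSON output must contain
    banned_phrases: set = field(default_factory=set)  # phrases that must not appear


def validate(output: str, contract: Contract) -> Decision:
    """Deterministically map a raw LLM output to a decision."""
    # Format check: the output must parse as a JSON object.
    try:
        data = json.loads(output)
    except json.JSONDecodeError:
        return Decision.BLOCK
    if not isinstance(data, dict):
        return Decision.BLOCK

    # Safety check: any banned phrase blocks the output outright.
    lowered = output.lower()
    if any(phrase.lower() in lowered for phrase in contract.banned_phrases):
        return Decision.BLOCK

    # Contract check: a well-formed but incomplete output is downgraded,
    # for example so the caller can fall back to a safer response.
    if not contract.required_keys.issubset(data.keys()):
        return Decision.DOWNGRADE

    return Decision.ALLOW


if __name__ == "__main__":
    contract = Contract(
        required_keys={"answer", "source"},
        banned_phrases={"guaranteed cure"},
    )
    print(validate('{"answer": "Rest and fluids.", "source": "doc-12"}', contract))
    print(validate('{"answer": "Rest and fluids."}', contract))
    print(validate("free-form text", contract))
```

Because the checks are plain rules rather than a second model call, the decision is reproducible and easy to audit. A real system would likely need richer contracts than this sketch assumes.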
